The Large Hadron Collider at CERN, the European Organization for Nuclear Research, finally smashed two beams of protons together at high speed on Tuesday after a couple of failed starts.

CERN was trying to get the two proton beams to collide at a combined energy of 7 trillion electron volts (TeV), but problems with the electrical system forced scientists to reset it and try again.

The experiment succeeded shortly after 1 p.m. Central European Summer Time.

CERN will run the collider for 18 to 24 months.

Do the Proton Mash

In the experiment, two proton beams traveling at more than 99.9 percent of the speed of light were made to collide, creating showers of new particles for physicists to study.

Each beam consisted of nearly 3,000 bunches of up to 100 billion particles each. The particles are tiny, so the chances of any two colliding are small. When two bunches cross, there will be only about 20 collisions among 200 billion particles, CERN said.

However, because the beams stream continuously into each other, bunches cross about 30 million times a second, resulting in up to 600 million collisions a second.
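The 600 million figure follows from multiplying the two numbers CERN gave. A minimal Python sketch of that arithmetic, taking the roughly 20 collisions per bunch crossing and 30 million crossings per second quoted above as its only inputs:

```python
# Approximate figures quoted by CERN in the article.
COLLISIONS_PER_CROSSING = 20          # collisions each time two bunches meet
CROSSINGS_PER_SECOND = 30_000_000     # bunch crossings per second


def collisions_per_second(per_crossing: int, crossings: int) -> int:
    """Collision rate = collisions per crossing x crossings per second."""
    return per_crossing * crossings


rate = collisions_per_second(COLLISIONS_PER_CROSSING, CROSSINGS_PER_SECOND)
print(f"{rate:,} collisions per second")  # 600,000,000 collisions per second
```

Both inputs are round numbers, so the result is an upper-end estimate rather than a measured rate.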

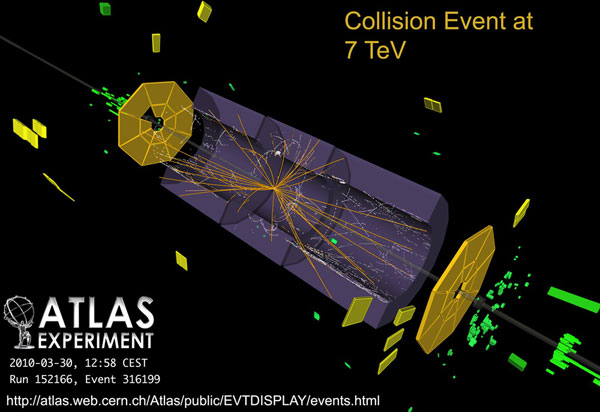

The experiment ran for more than three hours and recorded half a million events, according to a tweet from CERN. Four huge detectors -- Alice, Atlas, CMS and LHCb -- observed the collisions and gathered data.

Physicists worldwide are examining the results. They hope to shed light on the origin of mass, the grand unification of forces and the presence of dark matter in the universe.

Alice and the Kids

Alice will study the quark-gluon plasma, a state of matter that probably existed in the first moments of the universe. Quarks and gluons are the basic building blocks of matter. A quark-gluon plasma, also known as "quark soup," consists of almost free quarks and gluons. It exists at extremely high temperatures or extremely high densities or a combination of both.

CERN first tried to create quark-gluon plasma in the 1980s, and the results led it to announce indirect evidence for a new state of matter in 2000. Brookhaven National Laboratory's Relativistic Heavy Ion Collider is also working on creating quark-gluon plasma, and scientists there claim to have created the plasma at a temperature of about 4 trillion degrees Celsius. CERN's experiment with the LHC continues this line of work.

Atlas, the largest detector at the LHC, is a multipurpose detector that will help study fundamental questions such as the origin of mass and the nature of the universe's dark matter.

CMS is the heaviest detector at the LHC. It is a multipurpose detector consisting of several systems built around a powerful superconducting magnet.

LHCb will help scientists find out why the universe appears to consist almost entirely of matter, with very little antimatter.

How LHC Works

The Large Hadron Collider is simply a machine for concentrating energy into a relatively small space.

In Tuesday's experiment, two proton beams were smashed together at a total energy of 7 TeV (3.5 TeV per beam), the highest collision energy ever achieved in a laboratory.

Here's how the collider works: After the beams are accelerated to 0.45 TeV in CERN's accelerator chain, they are injected into the LHC ring, where they'll make millions of circuits. On each circuit, the beams will get an energy impulse from an electric field. This will go on until they hit the 7 TeV level.
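To see why "millions of circuits" with a modest kick on each one adds up to several TeV, here is a rough Python sketch. The 0.45 TeV injection energy and 7 TeV target come from the article; the ring circumference of about 26,659 meters, the roughly 20-minute ramp, and the assumption that the energy gain is spread evenly over every turn are illustrative assumptions, not figures from the text.

```python
# Average energy gain per turn during the energy ramp (rough sketch).
# From the article: injection at 0.45 TeV, target of 7 TeV.
# Assumptions (NOT from the article): ~26,659 m ring, protons moving at
# essentially the speed of light, and a ramp lasting about 20 minutes.

SPEED_OF_LIGHT = 299_792_458.0   # m/s
CIRCUMFERENCE_M = 26_659.0       # assumed ring circumference
RAMP_SECONDS = 20 * 60           # assumed ramp duration

INJECTION_EV = 0.45e12           # 0.45 TeV
TARGET_EV = 7.0e12               # 7 TeV


def turns_per_second(circumference_m: float) -> float:
    """Revolution frequency for a particle moving at ~c."""
    return SPEED_OF_LIGHT / circumference_m


def average_gain_per_turn(start_ev: float, end_ev: float,
                          ramp_s: float, circumference_m: float):
    """Spread the total energy increase evenly over every turn of the ramp."""
    total_turns = turns_per_second(circumference_m) * ramp_s
    return (end_ev - start_ev) / total_turns, total_turns


gain_ev, turns = average_gain_per_turn(INJECTION_EV, TARGET_EV,
                                       RAMP_SECONDS, CIRCUMFERENCE_M)
print(f"about {turns / 1e6:.1f} million turns")                # about 13.5 million turns
print(f"about {gain_ev / 1e3:.0f} keV gained per turn on average")  # about 485 keV
```

A real ramp is not linear, so the gain per turn varies along the way; the sketch only shows the order of magnitude: a few hundred thousand electron volts per turn, repeated some 13 million times.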

For some perspective on that 7 TeV figure, 1 TeV is roughly the energy of motion of a flying mosquito. Not much, but then a proton is about a trillion times smaller than a mosquito.
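The mosquito comparison can be checked by converting TeV to joules and inverting the kinetic-energy formula E = ½mv². In this Python sketch the 2.5-milligram mosquito mass is an assumed, illustrative figure. The electronvolt-to-joule factor is the exact SI value.

```python
import math

EV_IN_JOULES = 1.602176634e-19   # exact SI value of one electronvolt


def tev_to_joules(tev: float) -> float:
    """Convert tera-electronvolts to joules."""
    return tev * 1e12 * EV_IN_JOULES


def speed_for_kinetic_energy(energy_j: float, mass_kg: float) -> float:
    """Invert E = (1/2) m v^2 to get v."""
    return math.sqrt(2 * energy_j / mass_kg)


one_tev = tev_to_joules(1.0)
seven_tev = tev_to_joules(7.0)
print(f"1 TeV = {one_tev:.3e} J")      # 1 TeV = 1.602e-07 J
print(f"7 TeV = {seven_tev:.3e} J")

MOSQUITO_MASS_KG = 2.5e-6   # assumption: a mosquito of about 2.5 milligrams
v = speed_for_kinetic_energy(one_tev, MOSQUITO_MASS_KG)
print(f"a 2.5 mg mosquito carrying 1 TeV moves at about {v:.2f} m/s")  # about 0.36 m/s
```

A leisurely 0.36 meters per second is a plausible mosquito speed, which is the point of the comparison. The striking part is that a single proton carries that energy.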

At full power, each beam will have as much energy as a car traveling at about 995 miles per hour.
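A rough consistency check on the stored beam energy can combine the bunch figures given earlier (nearly 3,000 bunches of up to 100 billion protons) with an assumed full-power energy of 7 TeV per proton. This Python sketch also works out what vehicle mass would carry that energy at 995 mph. Because the bunch numbers are upper bounds, the result is an upper-end estimate.

```python
EV_IN_JOULES = 1.602176634e-19   # joules per electronvolt (exact SI value)
MPH_IN_M_PER_S = 0.44704         # exact: 1 mile per hour in m/s

# From the article: nearly 3,000 bunches of up to 100 billion protons.
BUNCHES = 3_000
PROTONS_PER_BUNCH = 100e9
# Assumption: at full power each proton carries 7 TeV.
ENERGY_PER_PROTON_EV = 7e12


def beam_energy_joules(bunches: float, protons_per_bunch: float,
                       ev_per_proton: float) -> float:
    """Total energy stored in one beam."""
    return bunches * protons_per_bunch * ev_per_proton * EV_IN_JOULES


def mass_for_energy(energy_j: float, speed_m_s: float) -> float:
    """Mass whose kinetic energy (1/2) m v^2 equals energy_j at speed_m_s."""
    return 2 * energy_j / speed_m_s ** 2


energy = beam_energy_joules(BUNCHES, PROTONS_PER_BUNCH, ENERGY_PER_PROTON_EV)
speed = 995 * MPH_IN_M_PER_S
mass = mass_for_energy(energy, speed)
print(f"stored energy per beam: about {energy / 1e6:.0f} MJ")
print(f"vehicle mass carrying that energy at 995 mph: about {mass:,.0f} kg")
```

With these inputs the beam holds roughly 336 megajoules, which at 995 mph corresponds to a vehicle of about 3.4 metric tons.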

Some people feared the LHC would generate small black holes that might have some impact on the universe. Not to worry, Joseph Lykken, a particle physicist at the Fermi National Accelerator Laboratory in Batavia, Ill., told TechNewsWorld.

"It is indeed possible that LHC collisions could produce microscopic black holes, but these would be rare events, if they happened at all," he explained. "At most, we'd have one tiny black hole at a time, and each of these should be highly unstable."

If black holes could be produced and studied this way, it would revolutionize our understanding of space, time and gravity, Lykken pointed out.

Lykken, who's involved in the CMS experiments at CERN, said that detector was recording about 100 collisions per second, more than expected.

The CMS analysis teams have begun posting the first results from that data, he said.

Keeping the LHC Running

CERN will run the LHC for 18 to 24 months to gather enough data to make significant advances across physics. Once the scientists have rediscovered the known Standard Model particles, they'll begin looking for the elusive Higgs boson.

The Standard Model of particle physics is a theory of three of the four known fundamental interactions and the elementary particles that take part in these interactions. These particles make up all visible matter in the universe. They are quarks, leptons and gauge bosons.

"This is only the beginning of a discovery process that will take months and years, as thousands of physicists analyze the debris from billions of collisions," Lykken said.

"The discovery of the Higgs boson will most likely take several years, but other discoveries could happen much sooner," he added.

"There's a lot of preliminary data that we'll be getting that will be useful in the sense that we can check predictions made about cross sections and multiplicities and charge particle production," said Brandeis University physics professor James Bensinger, who participated in the development of the Atlas Experiment on the LHC.

The search for the Higgs boson will probably have to wait until the next run, Bensinger told TechNewsWorld. The LHC will probably run for another 20 to 30 years, in cycles of two years of operation followed by a year of shutdown for tweaking and maintenance.

America, Where Are You Now?

The United States had a supercollider project of its own under way, the Superconducting Super Collider, but the project was killed when the House of Representatives canceled funding for it in 1993.

By that time, 14 miles of tunnels had been dug and US$2 billion spent on it.

The laboratory site is close to the town of Waxahachie, Texas, south of Dallas. The site has been sold to an investment group that plans to turn it into a secure data storage center.

It's not likely that this supercollider project will be revived, Bensinger said.

"The LHC could probably do what that supercollider could do anyway," he pointed out.

If major discoveries result from the LHC experiment, however, U.S. scientists may be able to make the case for a new generation of colliders to explore new avenues of research, he suggested.

"The U.S. has a strong research and development program in place that could allow us to build a future supercollider using radically new technologies," Lykken pointed out. "It remains to be seen whether we have the political will to compete with the Europeans, who have devoted $10 billion to the successful LHC project."

By Richard Adhikari

From technewsworld.com